The Commission revealed its much-anticipated, first-ever proposal for a legal framework on AI

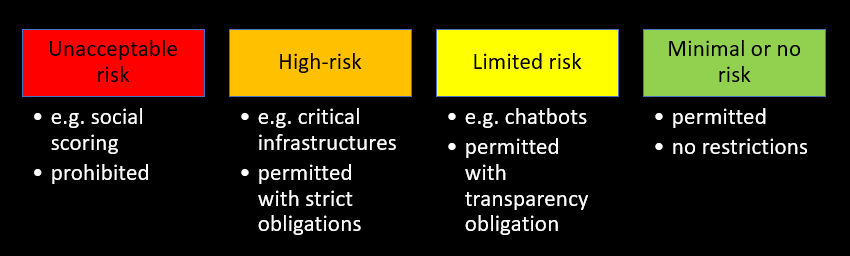

The proposal, called the ‘Regulation laying down harmonised rules on artificial intelligence (Artificial Intelligence Act)’, is the first of its kind. Until now, there has been no specific, harmonised legislation regulating the provision or use of AI in the European Union (EU). The EU has chosen a risk-based approach, meaning that AI systems are categorised on a scale from unacceptable risk to minimal or no risk. The regulation aims to cover all AI systems, both present and future. This is why the definition of AI in the draft proposal is so general: technology that does not yet exist will fall under the regulation once it is created.

The European Commission aims to turn the EU into the ‘global hub for trustworthy AI’ by setting the world’s highest standards for artificial intelligence and developing ‘human-centric, sustainable and secure’ AI. The EU says it wants to set strict rules on AI systems in order to protect the safety and fundamental rights of its citizens as well as businesses operating in the EU area, while driving innovation and mainstream adoption of the technologies. The EU states that the new rules will also increase the users’ trust in AI systems.

Since the Commission’s plan is to create unified rules covering the whole EU, it has decided that the rules will be implemented as a regulation, so that they apply in the same way in all member states.

What are the risks related to AI systems?

The European Commission has proposed the following classification for AI solutions when they are used in certain industries:

Unacceptable risk

AI systems that pose a significant threat to the safety, livelihoods and rights of people will be prohibited under the proposed regulation. These include AI systems that exploit people’s vulnerabilities or try to manipulate users’ free will. In addition, social scoring of people by public authorities is considered to carry an unacceptable risk, as such systems pose a threat to equality by possibly leading to discriminatory outcomes.

High-risk

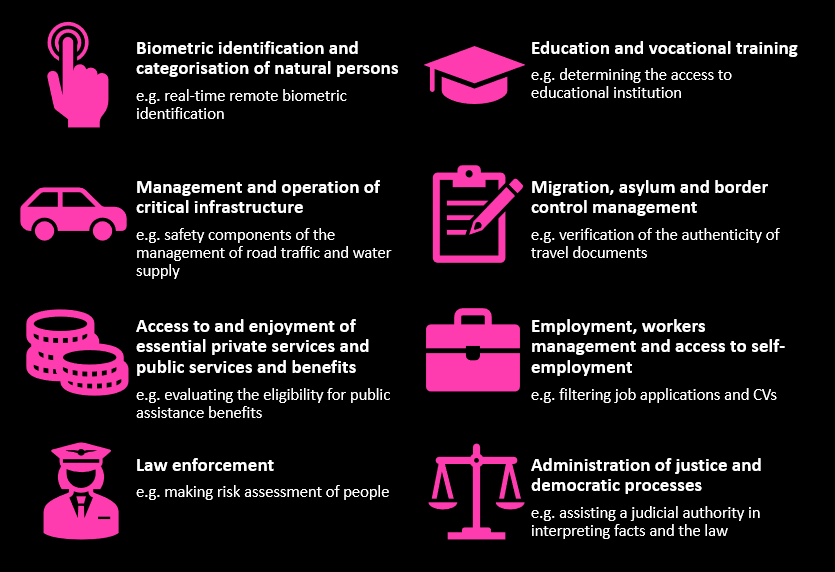

High-risk AI, as listed in Annex III of the regulation, includes AI systems used in:

The Commission has reserved the right to update the list in the Annex if it deems this necessary.

The regulation sets strict requirements for high-risk AI systems, which companies would need to comply with in order to provide their AI system on the EU market:

- Establishing a risk assessment and mitigation system

- Training the system with high quality data to minimise risks and discriminatory outcomes

- Logging the activities of the AI system

- Drawing up and keeping up-to-date and detailed documentation regarding the system

- Ensuring sufficient transparency and information to users

- Taking appropriate human oversight measures to minimise risk

- Achieving an appropriate level of robustness, security and accuracy

Limited risk

This category includes AI systems with specific transparency obligations. Companies that provide AI systems falling under the limited risk category will have to ensure that users are aware that they are interacting with an AI system. Users will need to be informed of this separately, unless it is obvious from the circumstances that they are interacting with an AI system. This applies, for example, to chatbots and other bots imitating humans.

In addition, so-called deepfakes (e.g. AI-generated videos of celebrities, politicians or others that are fake but appear to be real) should be labelled as manipulated or artificially generated.

Minimal or no risk

Most AI systems pose no threat to the rights and safety of people and thus fall into this category. AI solutions with only a minimal risk or no risk at all can be used freely. These include, for example, video game AI and spam filters.
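For readers who prefer a compact overview, the four categories can be summarised as a small lookup table. The following Python sketch is only an illustration of the classification as described in this article; the names `RiskTier`, `CONSEQUENCE`, `EXAMPLES` and `consequence_for` are our own and do not come from the proposal. No high-risk examples are included, because the Annex III list is not reproduced in this text.

```python
from enum import Enum


class RiskTier(Enum):
    """The four risk categories described in this article (illustrative names)."""
    UNACCEPTABLE = "unacceptable risk"
    HIGH = "high-risk"
    LIMITED = "limited risk"
    MINIMAL = "minimal or no risk"


# What each category means for a provider, as summarised in the article.
CONSEQUENCE = {
    RiskTier.UNACCEPTABLE: "prohibited",
    RiskTier.HIGH: "allowed, subject to strict requirements",
    RiskTier.LIMITED: "allowed, subject to transparency obligations",
    RiskTier.MINIMAL: "can be used freely",
}

# Only the examples named in the article; this is not an exhaustive mapping.
EXAMPLES = {
    "social scoring by public authorities": RiskTier.UNACCEPTABLE,
    "chatbot": RiskTier.LIMITED,
    "deepfake": RiskTier.LIMITED,
    "video game AI": RiskTier.MINIMAL,
    "spam filter": RiskTier.MINIMAL,
}


def consequence_for(example: str) -> str:
    """Look up the category of a named example and return its consequence."""
    return CONSEQUENCE[EXAMPLES[example]]


if __name__ == "__main__":
    for name, tier in EXAMPLES.items():
        print(f"{name}: {tier.value} ({consequence_for(name)})")
```

In practice, classifying a real system requires a legal assessment against the text of the regulation; the table above only mirrors the examples given here.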

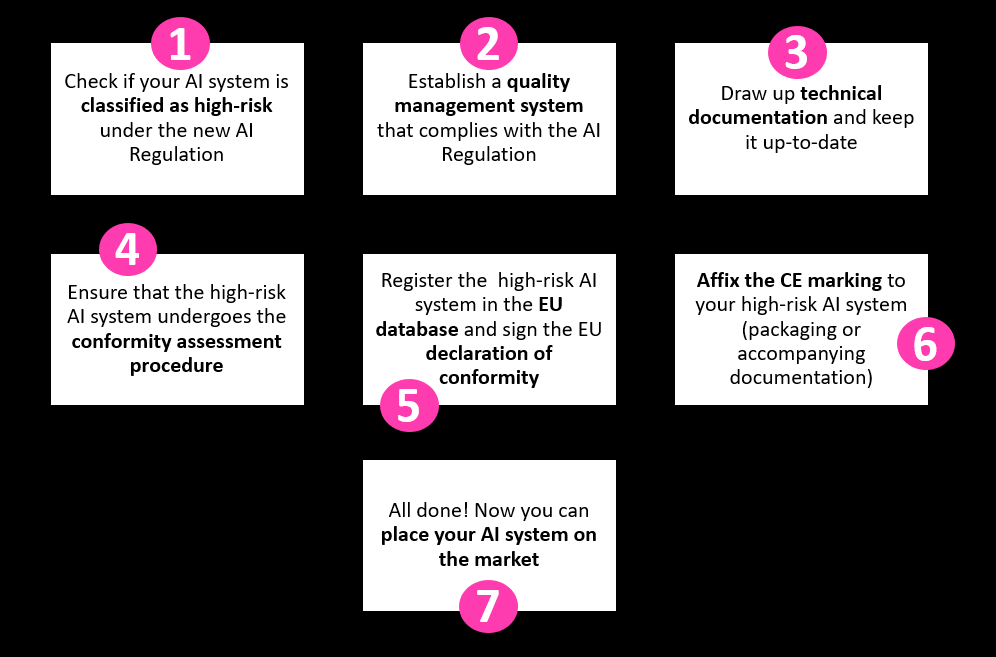

Obligations related to the development and use of high-risk AI systems

Providers of high-risk AI systems also need to fulfil certain obligations to ensure that their product can be put on the market, so companies dealing with such solutions should pay attention to the requirements of the regulation in the future. Below is the proposed process for AI providers:

One significant change is that providers of high-risk AI solutions must obtain a CE marking for their technology. The CE marking indicates that a product has been assessed and meets the requirements of the relevant Union legislation regulating the product in question.

How will the changes affect startups?

For many companies, the new regulation may not change much. Game studios can develop their games as usual (that is, if they are not trying to exploit or manipulate players, which will be banned) and companies can still use chatbots to communicate with their clients.

However, companies developing AI systems that will be classified as high-risk once the regulation enters into force need to pay close attention to the requirements and obligations. As under the GDPR, non-compliance with the requirements or obligations will be subject to administrative fines of up to 20 000 000 EUR or 4 % of the company’s total annual turnover for the preceding financial year, whichever is higher.
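To illustrate the ‘whichever is higher’ rule, the upper limit of the fine can be worked out with simple arithmetic. The Python sketch below uses only the two figures quoted above (20 000 000 EUR and 4 %); the function name `max_fine_cap_eur` and the turnover figures in the example are hypothetical. It computes the ceiling of a possible fine, not the amount an authority would actually impose.

```python
FIXED_CAP_EUR = 20_000_000   # fixed amount quoted in the article
TURNOVER_PERCENT = 4         # 4 % of total annual turnover


def max_fine_cap_eur(annual_turnover_eur: int) -> int:
    """Return the upper limit of the fine in whole euros.

    The limit is the higher of the fixed amount and 4 % of the
    preceding financial year's total annual turnover.
    """
    turnover_based_cap = annual_turnover_eur * TURNOVER_PERCENT // 100
    return max(FIXED_CAP_EUR, turnover_based_cap)


if __name__ == "__main__":
    # Hypothetical startup with a turnover of 5 million EUR:
    # 4 % would be 200 000 EUR, so the fixed amount is the higher limit.
    print(max_fine_cap_eur(5_000_000))

    # Hypothetical large company with a turnover of 1 billion EUR:
    # 4 % is 40 million EUR, which exceeds the fixed amount.
    print(max_fine_cap_eur(1_000_000_000))
```

The two limits coincide at a turnover of 500 million EUR. Below that figure the fixed amount sets the ceiling, which is why the rule weighs relatively more heavily on smaller companies.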

Complying with all the requirements can be costly, as companies may need to hire outside experts to ensure compliance. In addition, obtaining the mandatory CE marking for a high-risk AI solution may not be cheap, and the price may vary depending on the features of the AI system.

Should the regulation be implemented as currently proposed, it may cause a significant increase in costs for startups and large tech companies alike. Some critics have stated that the regulation may hamper innovation and drive companies out of the EU if AI product development becomes impossible due to excessive costs.

However, it is also possible that the new regulation will actually help companies operating in the EU to raise funding and increase sales, as they can be branded as trustworthy providers once their systems have been registered and have received the CE marking. Market and mainstream adoption of AI may increase, as consumers may more quickly trust systems that have gone through a rigorous audit and are subject to oversight. In addition, the CE marking can be an asset when taking AI products to markets outside the EU, because it guarantees that the AI solution is ethical and secure, and that this has been proven by a very careful examination.

Conclusion

As the EU does not yet have legislation on artificial intelligence, it was natural that regulation of AI was considered necessary. The European approach emphasises the values of the EU, and the aim of the regulation is clearly to brand the EU’s AI as the most trustworthy and ethical. The EU’s approach differs from that of the US and China, where regulation is much less strict.

The downsides of the proposed regulation are the possible increases in costs and bureaucracy, which can be challenging, although the Commission did mention assistance and possible funding for companies in the sector to ensure that EU AI companies remain competitive.

As the released proposal is the first of its kind, there may be quite a lot of discussion ahead, and it will take some time for the regulation to take its final form and be implemented. Over the coming years, it is advisable to follow the discussion closely to see what the final framework will be. Artificial intelligence systems are a useful technology that is already transforming how we conduct our daily lives. Regulation brings more trust to the industries being regulated, but this should not come at the cost of innovation. Thus, instead of regulating the development of artificial intelligence systems, it may be more worthwhile to regulate their use, and to apply stringent requirements only when an AI system is used in a high-risk industry, e.g. health care. It remains to be seen what the outcome will be and how long the regulatory process will take, but one thing is certain: AI systems will be regulated, for better or for worse, within the next few years.

Read more

- the Proposal for a Regulation on a European approach for Artificial Intelligence

- an updated Coordinated Plan with Member States

- the Proposal for a Regulation on machinery products

Text and additional information:

Juuso Turtiainen, Associate, +358 40 764 8910

Anni Kaarento, Legal Trainee